And what the science — and AI — say about how to stop them.

I would leave work after a long, hard day in the city and simply have no energy to attend my GoJu Ryu Karate training. I’d call my wife, as though for permission to quit, and she would always talk me into going.

So, I did.

I would always call her back after the lesson, almost high from how awesome it felt to use my body. She knew exactly what to say to get me to go, always motivating and tailoring her advice to me.

So perhaps people quit fitness because the system they are using treats them like the average of their demographic (just a spreadsheet entry saying “Male, 28, Intermediate”) rather than a unique athlete whose capacity shifts from week to week. Life intervenes for all of us, and real psychology is incompatible with rigid programming.

I work in AI Enablement by trade and have spent years designing positive feedback loops that close the gap between data and lasting behavioural change. As a Data Scientist building software, I read deeply, using my university sign-on, to find the scientific principles the fitness industry consistently ignores when designing software for athletes.

Here are the top six, and how I programmed for them in The Iron Church:

1. The MEV–MRV Corridor Is Personal, Not Universal

Minimum Effective Volume (MEV), which is the smallest amount of training that produces results, and Maximum Recoverable Volume (MRV), which is the most training you can recover from, are not fixed numbers. They’re dynamic, individual ranges that change with elements like sleep quality, training age, stress, and mesocycle stage. Israetel et al. (2019) showed that MRV can vary for the same athlete from Week 1 to Week 4, yet most programmes ignore this.

What this means in practice: a programme that starts you at your Week-4 capacity will immediately overtrain you. A programme stuck at Week-1 capacity will bore you into maintenance. Neither produces results.

The only honest answer is progressive volume titration: start near MEV, add volume week-on-week, and monitor fatigue markers to know when you’ve hit your ceiling. Doing this manually is genuinely hard. Doing it automatically on a per-muscle-group, per-session basis is a software problem.
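To make the titration idea concrete, here is a minimal sketch, not the app's actual code. The `VolumeCorridor` type, the two-set weekly step, and the single `fatigue_flag` are all assumptions made for illustration; a real system would derive the flag from several fatigue markers.

```python
from dataclasses import dataclass


@dataclass
class VolumeCorridor:
    """Hypothetical per-muscle corridor, measured in weekly sets."""
    mev: int  # Minimum Effective Volume
    mrv: int  # Maximum Recoverable Volume


def next_week_sets(current_sets: int, corridor: VolumeCorridor,
                   fatigue_flag: bool, step: int = 2) -> int:
    """Return next week's planned sets for one muscle group.

    fatigue_flag and step are illustrative assumptions: the flag stands in
    for whatever fatigue markers are being monitored.
    """
    if fatigue_flag:
        # Ceiling reached: drop back to MEV instead of pushing on.
        return corridor.mev
    # Otherwise add a small step, never going past the MRV ceiling.
    return min(max(current_sets, corridor.mev) + step, corridor.mrv)


# Example: quads start at MEV and climb until they hit MRV.
quads = VolumeCorridor(mev=8, mrv=16)
plan = [quads.mev]
for _ in range(5):
    plan.append(next_week_sets(plan[-1], quads, fatigue_flag=False))
```

With these assumed numbers the plan climbs two sets a week and then holds at the ceiling; a raised fatigue flag at any point sends the next week back to MEV.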

Iron Church AI Implementation — MEV/MRV Management

Weekly volume per muscle group is tracked deterministically — not by the AI. Every exercise in the database carries a muscle-group tag and a sets-equivalent weight; after each logged session, a calculation updates a per-muscle register. Separately, at session check-in, the athlete rates sleep quality, energy, and stress on three emoji sliders. These produce a composite readiness score (0–100) that maps to a zone (Green / Amber / Orange / Red) and a pre-computed directive string.

The AI receives both the fatigue heatmap and the directive as injected context. It does not compute volume totals or decide when to deload. Deload triggers are set by a deterministic five-signal engine. The AI executes the verdict; it does not produce it. The volume state is stored in the app database, not in the LLM context, which means no context rot and no drift. Each session the AI re-reads the state and reasons forward from current conditions, not from a degrading conversation history.
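One way to picture "state lives in the database, not in the conversation" is a function that rebuilds the model's context from stored state on every call. This is a sketch under assumptions: the field names and the `build_context` helper are hypothetical, and the database read and the model call are left out.

```python
import json


def build_context(state: dict) -> str:
    """Render stored state into a context block for one stateless model call.

    The keys below are hypothetical; the point is that every value is
    computed by the app and only read by the model.
    """
    payload = {
        "fatigue_heatmap": state["fatigue_heatmap"],
        "readiness_directive": state["readiness_directive"],
        "deload": state["deload"],
    }
    header = "CURRENT STATE (computed by the app, read-only):\n"
    return header + json.dumps(payload, indent=2, sort_keys=True)


# Example state, as it might be loaded from storage before a session.
stored_state = {
    "fatigue_heatmap": {"quads": "loaded", "chest": "fresh"},
    "readiness_directive": "Reduce top-set intensity",
    "deload": False,
}
context = build_context(stored_state)
```

Because the same stored state always produces the same context, nothing depends on what the model said in an earlier conversation.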

2. Fatigue Accumulates at the Muscle Level, Not the Session Level

That catchy “full-body” TikTok programme may look balanced, but it can end up hammering your posterior chain three times a week, because the coach counted “sessions” rather than sets per muscle group per week.

Meta-analyses on training frequency (Schoenfeld, Ogborn & Krieger, 2016) show that how often you train doesn’t matter if the total amount of work is equal. The key variable is the number of sets you do per muscle group per week, not the number of days or sessions.

Any system that doesn’t track this will eventually create imbalances. And it won’t know to pull back on quad volume the week after you ran a half-marathon, because it doesn’t know you ran a half-marathon.

Iron Church AI Implementation — Muscle-Level Tracking

Every exercise in the database is tagged with primary and secondary muscle groups and an estimated sets-equivalent weight. After each session is logged, a deterministic calculation (not AI, as this is exactly the kind of problem that should not be given to an LLM) updates a per-muscle-group volume register. The AI is then called with the full register visible in its prompt. If a user logs “ran 10km” in the notes field, the AI is prompted to parse the activity and apply a quad/hamstring/calf volume penalty before generating the next session. This is a deliberately hybrid design.
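The deterministic half of that hybrid can be very small. The sketch below is an illustration rather than the app's code: the exercise names, the sets-equivalent weights, and the `update_register` helper are all made up for the example.

```python
from collections import defaultdict

# Hypothetical exercise database: muscle group -> sets-equivalent weight.
# A primary mover counts as a full set, secondary movers as a fraction.
EXERCISES = {
    "back_squat": {"quads": 1.0, "glutes": 0.5, "hamstrings": 0.25},
    "romanian_deadlift": {"hamstrings": 1.0, "glutes": 0.5},
}


def update_register(register, session):
    """Add one logged session to the per-muscle volume register.

    session is a list of (exercise_name, sets_completed) pairs.
    """
    for exercise, sets in session:
        for muscle, weight in EXERCISES[exercise].items():
            register[muscle] += sets * weight
    return register


register = defaultdict(float)
update_register(register, [("back_squat", 4), ("romanian_deadlift", 3)])
```

After that one session the register holds 4.0 sets-equivalent for quads, 4.0 for hamstrings, and 3.5 for glutes, which is the kind of table the model then sees in its prompt.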

3. Adherence Destroys All Other Variables

This one is so obvious it feels embarrassing to include, but the data is clear. In a meta-analysis of health interventions informed by self-determination theory, or SDT (Ntoumanis et al., 2021), the single strongest predictor of long-term adherence wasn’t programme quality, coach experience, or gym access. It was session flexibility: the ability to modify a planned session without abandoning the goal.

Rigid programming creates a specific failure mode. If you miss Tuesday, you feel like you’ve broken the block and mentally write off the week, so you don’t show up on Friday either. This is not a willpower problem. It’s a design problem.

An athlete who completes 80% of a flexible programme for six months will outperform one who completes 60% of a perfect programme for three months, every time.

Iron Church AI Implementation — Dynamic Rescheduling

The app has no concept of a “failed week”. Skipped sessions do not break the mesocycle — the plan pointer stays where it is, and the next generated session is drawn from the correct position in the cycle. Attempting to retrospectively “absorb” missed volume by stacking it onto future sessions would recreate the overtraining problem the system exists to prevent. Brodin (the AI coach) is explicitly prompted never to comment negatively on a missed session; he acknowledges the current state and moves forward.
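The "plan pointer" behaviour is easy to sketch. The `Mesocycle` class and its method names below are hypothetical, chosen only to show that a skipped session changes nothing.

```python
from dataclasses import dataclass


@dataclass
class Mesocycle:
    """Hypothetical plan: an ordered list of sessions plus a pointer."""
    sessions: list
    pointer: int = 0  # index of the next session to perform

    def next_session(self):
        """Return the session at the pointer, or None when the cycle is done."""
        if self.pointer < len(self.sessions):
            return self.sessions[self.pointer]
        return None

    def log_completed(self):
        # Only a completed session advances the plan.
        self.pointer += 1

    def log_skipped(self):
        # Deliberately a no-op: no penalty, no missed volume stacked on later.
        pass


meso = Mesocycle(sessions=["Lower A", "Upper A", "Lower B"])
meso.log_completed()   # Lower A done
meso.log_skipped()     # missed a day
upcoming = meso.next_session()
```

Here `upcoming` is still "Upper A": the missed day neither advances the plan nor adds anything to it.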

4. Specificity Requires Context, Not Just Exercise Selection

Specificity (the S in SAID — Specific Adaptation to Imposed Demands) is often described as ‘do the movements you want to improve.’ This is true, but incomplete.

It also means the rep ranges, tempos, and fatigue states must match your actual goal. Generic programmes can’t account for this. The specificity isn’t just in the movements. It’s in the accumulated context of who you are, what you’re preparing for, and where you are in the training year.

Iron Church AI Implementation — Context-Aware Programming

The session generation prompt assembles sixteen distinct context layers from Firestore on every call. Here is what specificity actually means in practice:

- Biometrics with implied biomechanics: The AI receives age, height, weight, and sex, calculating BMI and leverage profiles. Very tall athletes receive volume adjustments due to mechanically costlier ranges of motion.
- 1RM data for weight prescription: Squat, bench, deadlift, and OHP maxes are stored. Every first-time exercise is opened at 70% of the relevant 1RM.
- Available plates: The exact plate denominations the athlete owns. Weight prescriptions are constrained to combinations physically loadable on their bar.
- The Bane List: Exercises the athlete has permanently banned based on preference. Never overridden.
- Injury constraints with tiered substitution logic: A constraint parser branches by injury type before the prompt is built, producing forbidden movement categories and substitution directives.
- A discipline-specific programming guide: Nineteen training styles, each with a complete programming ruleset.
- Endurance athlete override: If running is included, heavy barbell compounds are forbidden, RPE is capped, and sessions are limited to 45 minutes.
- Mesocycle week and deload state: Week 4 produces a different session than Week 2 based on the app engine’s deterministic deload trigger.
- Long-term campaign countdown: Goal timelines (e.g., “marathon in March”) dictate the current phase: Foundation → Development → Peak → Polish → Taper.
- Recent performance history: The AI receives the last five sessions, including a met/missed flag per set, and the AI’s previous coaching verdict to maintain continuity.
- Muscle fatigue heatmap: A 7-day per-muscle volume register shows what is loaded, fresh, or approaching the MRV ceiling.
- Pre-computed readiness directive: The AI receives a pre-computed instruction (e.g., “Reduce top-set intensity”) based on the daily check-in.
- Experience level: Beginner, intermediate, and advanced athletes get different RPE ceilings, progression schemes, and note styles.
- Planned session override: If following a pre-generated plan, the AI’s role shifts to adjusting the specific weights/volumes based on today’s fatigue and readiness.
- Coach attitude: Blessed, Standard, or Punished modes change the linguistic register of the feedback.

All of this happens before a single exercise is named.
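Two of those layers, the 70% opening weight and the available-plates constraint, combine into a small arithmetic problem. The sketch below is one possible approach, not the app's implementation: the function names, the 20 kg bar, the plate inventory, and the choice to round down to the nearest loadable weight are all assumptions.

```python
def loadable_weights(bar_kg, plate_pairs):
    """List every total weight that can be loaded on the bar.

    plate_pairs maps a plate weight in kg to the number of pairs owned,
    so each plate chosen adds twice its weight to the total.
    """
    per_side = {0.0}
    for plate, pairs in plate_pairs.items():
        for _ in range(pairs):
            # Each owned pair can either go on the bar or stay on the rack.
            per_side |= {load + plate for load in per_side}
    return sorted(bar_kg + 2 * load for load in per_side)


def prescribe(one_rm_kg, bar_kg, plate_pairs, fraction=0.70):
    """Heaviest loadable weight at or below fraction * 1RM.

    Rounding down is an assumption; falls back to the empty bar when the
    target is lighter than the bar itself.
    """
    target = one_rm_kg * fraction
    options = [w for w in loadable_weights(bar_kg, plate_pairs) if w <= target]
    return max(options, default=bar_kg)


# Example inventory: two pairs of 20s and one pair each of the smaller plates.
plates = {20: 2, 10: 1, 5: 1, 2.5: 1, 1.25: 1}
opening = prescribe(one_rm_kg=140, bar_kg=20, plate_pairs=plates)
```

For a 140 kg 1RM the target is 98 kg, and the heaviest combination this athlete can actually load at or below that is 97.5 kg, so that is what gets prescribed.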

5. Psychological Load Is a Real Training Variable

Psychophysiological readiness is the idea that things like how hard you feel an exercise is (perceived exertion), your mood, and your overall stress level are not just psychological — they are reliable predictors of how you will perform and recover.

Lifting at RPE 8 on a day when your stress hormones are high is different from lifting at RPE 8 on a normal, less stressful day. The practical implication: if your programme ignores self-reported readiness data, it is mistaking signal for noise.

Iron Church AI Implementation — Readiness Check-In

At session start, the app presents three emoji-slider questions: sleep quality, energy, and stress. The composite score maps deterministically to a zone (Green, Amber, Orange, Red) and specifies exactly how the session should be modified. Deeper reflective prompts are reserved for end-of-session, when the user is in a post-exercise positive affect state.
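A deterministic mapping like this fits in a few lines. In the sketch below the 1 to 5 slider scale, the equal weighting, the zone thresholds, and every directive except “Reduce top-set intensity” are placeholders chosen for illustration, not the app's real values.

```python
def readiness_score(sleep, energy, stress):
    """Combine three slider values into a 0-100 score.

    Assumes each slider runs from 1 to 5. Stress is inverted because a
    higher stress rating should lower readiness.
    """
    for value in (sleep, energy, stress):
        if not 1 <= value <= 5:
            raise ValueError("slider values must be between 1 and 5")
    positive = (sleep - 1) + (energy - 1) + (5 - stress)  # ranges 0..12
    return round(positive * 100 / 12)


# (minimum score, zone, directive): thresholds and wording are assumed.
ZONES = [
    (75, "Green", "Run the session as planned."),
    (50, "Amber", "Reduce top-set intensity."),
    (25, "Orange", "Cut volume and cap effort."),
    (0, "Red", "Swap for a recovery session."),
]


def zone_for(score):
    """Return the (zone, directive) pair for a composite score."""
    for minimum, zone, directive in ZONES:
        if score >= minimum:
            return zone, directive
    raise ValueError("score must not be negative")


score = readiness_score(sleep=3, energy=3, stress=3)
zone, directive = zone_for(score)
```

A middling check-in (3, 3, 3) scores 50 under these assumptions and lands in Amber, so the model is handed the directive rather than asked to invent one.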

6. Your Coach’s Personality Is a Design Variable

In 2005, Bickmore and Picard ran a randomised trial of an exercise-counselling agent. A relational group got empathetic, rapport-building interactions, while a control group got task-focused logging. The relational group scored higher on the Working Alliance Inventory and were more likely to continue long-term.

Identity attachment to a character modifies behaviour. Self-determination theory says autonomy and competence are easy to build into an app, but relatedness — the impression that something genuinely cares about you — needs a character, not just a set of features.

Iron Church AI Implementation — The Brodin Character

Brodin is a fictional coaching entity defined in the system prompt as a detailed character sheet: tone of voice (direct, low-ego, darkly funny), knowledge domain, relational style, and hard constraints (no toxic positivity). Testing involved explicitly red-teaming the character — trying to get it to break personality or give unsafe advice — and iterating until it held under pressure.

Where This Led Me

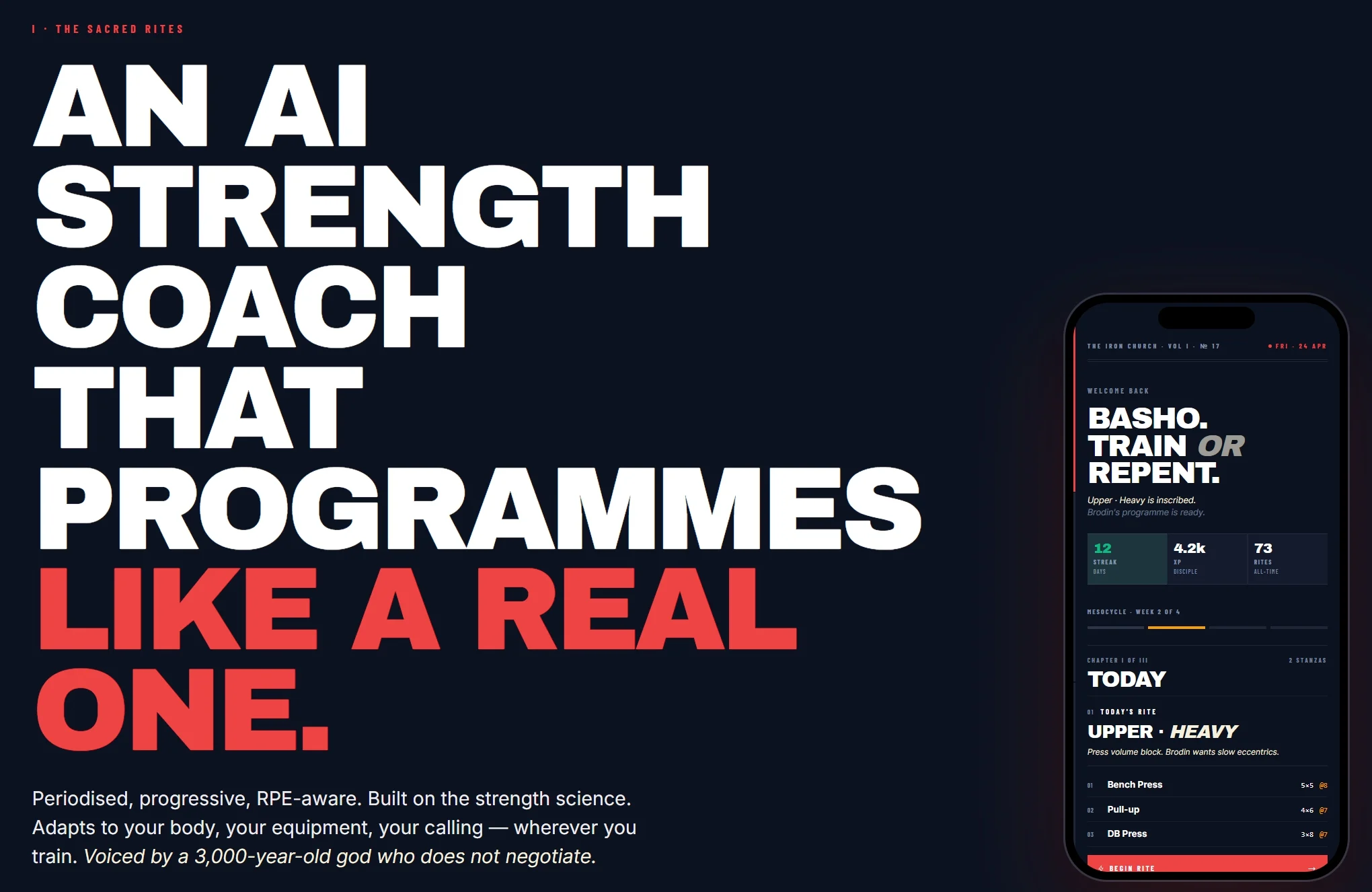

Fed up with dry, expensive programs, I experimented with AI chatbots for training — but I found the “context rot” problem impossible to get around. So, I built something to solve these problems for myself: The Iron Church, a training app that uses AI to generate personalised programming, tracks volume per muscle group, adjusts based on logged fatigue, and delivers it through a coaching character called “Brodin”.

How Rigid Programming Compares to AI-Flexible Programming

Missed Sessions

- Rigid: Triggers “abstinence violation effect”; user abandons week.
- AI-Flexible: Calendar shifts dynamically; user maintains unbroken streak.
- Rationale: Preserves psychological autonomy (SDT) and prevents total adherence collapse.

Acute Fatigue

- Rigid: User trains through pain to satisfy the algorithm; injury risk spikes.
- AI-Flexible: User autoregulates load or delays session without software penalty.
- Rationale: Reduces allostatic load and protects against connective tissue damage.

Microcycle Length

- Rigid: Forced into 7-day blocks, causing suboptimal recovery windows.
- AI-Flexible: Microcycles expand fluidly (e.g., 8–10 days) based on real-world completion.
- Rationale: Physiological recovery kinetics are non-linear; adaptation occurs independently of calendar weeks.

The question worth asking is whether your current system accounts for any of this — or whether it’s quietly optimising for compliance with a programme that was never designed for you.

AI Lessons Learned: What Building This Taught Me

Building a production AI application that actually changes behaviour required solving a different set of problems than I anticipated:

- SEPARATE STATE FROM AI: The LLM is a reasoning engine, not a database. Persistent user state lives in structured storage.
- DETERMINISTIC WHERE POSSIBLE, GENERATIVE WHERE NECESSARY: Volume arithmetic is deterministic. Contextual reasoning is generative.
- STRUCTURED OUTPUT IS NON-NEGOTIABLE: Every AI call returns JSON. No prose that needs parsing.
- THE SYSTEM PROMPT IS A DESIGN DOCUMENT: Writing Brodin’s character sheet before writing code forced clarity about what the product actually is.
- RED-TEAM YOUR CHARACTER BEFORE YOU SHIP: Build adversarial scenarios into your QA. Try to make it break.
- DESIGN FOR HUMAN PSYCHOLOGY, NOT AI CAPABILITY: The three-question check-in limit was a behavioural constraint, not a technical one.
- CONTEXT ROT IS ARCHITECTURAL: Continuity comes from stored state in Firestore, not from the model remembering a conversation history.
- TEST YOUR PROMPTS LIKE UNIT TESTS: Evaluate prompt changes against fixed test cases (edge fatigue states, missing data, adversarial inputs).
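The structured-output and prompt-testing lessons meet in one place: a strict parser that rejects anything that is not the expected JSON, which fixed test cases can then exercise. This is a sketch only; the `exercises` schema, the field names, and `parse_session` are hypothetical and not the app's real contract.

```python
import json

# Hypothetical schema: each prescribed exercise must carry these fields.
REQUIRED_FIELDS = {
    "exercise": str,
    "sets": int,
    "reps": int,
    "weight_kg": (int, float),
}


def parse_session(raw):
    """Parse and validate a model reply; raise ValueError if malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict) or not isinstance(data.get("exercises"), list):
        raise ValueError("reply must be an object with an 'exercises' list")
    for item in data["exercises"]:
        if not isinstance(item, dict):
            raise ValueError("each exercise must be a JSON object")
        for field, expected in REQUIRED_FIELDS.items():
            if not isinstance(item.get(field), expected):
                raise ValueError(f"missing or mistyped field: {field}")
    return data["exercises"]


# A fixed test case of the kind a prompt change would be checked against.
good_reply = (
    '{"exercises": [{"exercise": "back_squat", "sets": 3, '
    '"reps": 5, "weight_kg": 97.5}]}'
)
session = parse_session(good_reply)
```

A chatty reply such as "Sure! Here is your workout" fails at the first step, which is the behaviour you want to pin down in a test before shipping a prompt change.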

Are you building AI-powered products?

DM me or follow for more on AI solution design, behavioural science, and building software that makes people better at things.

Early access to The Iron Church beta: link in profile